Grubby AI is one of those tools that only exists because of a very 2025 problem: we wanted AI to write for us, then built more AI to catch it, and now we’re building yet another layer to disguise it again. It doesn’t promise to be your muse; it promises to be your cleaner.

Think of Grubby AI as a post‑processor for AI text. You don’t start with it; you arrive at it when your original AI draft looks just a bit too robotic for comfort.

At its core, Grubby is a browser‑based AI humanizer that takes AI‑generated text and rewrites it to sound more like a human wrote it and less like a language model stitched it together in 0.7 seconds. It is built for people who are already using ChatGPT, Claude or similar tools but who know that raw AI output tends to trigger both detectors and human suspicion.

The target users are not subtle: students trying to get assignments past Turnitin, bloggers and SEO writers who don’t want their posts to scream “LLM,” and freelancers and agencies who want to move fast without having every paragraph smell like the same AI template. Grubby leans into that reality and openly markets itself as an AI detection bypasser that also polishes style along the way.

Strip away the landing page promises, and Grubby is doing something quite technical but conceptually simple: it tries to break the patterns that both detectors and humans associate with AI writing.

You paste your text in, choose a mode and hit a button. Under the hood, several things happen:

● Sentence structures are reshuffled (shorter/longer alternations, different clause ordering).

● Word choices are swapped out to reduce repetitive phrasing and generic AI vocabulary.

● Punctuation and rhythm change to create less uniform sentence cadences.

The intent is not to add random errors but to inject the small inconsistencies and variations that human writing naturally has, while maintaining the original meaning as much as possible.
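To make "less uniform sentence cadences" concrete, here is a minimal sketch of one way to measure how much sentence length varies in a piece of text. It is only an illustration: the function names `sentence_lengths` and `cadence_variation` are made up for this example, the sentence splitter is deliberately naive, and nothing here is claimed to be how Grubby or any detector actually works.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Naively split on ., ! and ? and return the word count of each sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def cadence_variation(text: str) -> float:
    """Coefficient of variation of sentence length; 0.0 means perfectly uniform."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


# Illustrative inputs (not taken from any real test).
uniform = "The tool is fast. The tool is cheap. The tool is simple."
varied = (
    "It works. Sometimes, though, the output drifts far from what you "
    "actually meant to say. Check it."
)

print(sentence_lengths(uniform), cadence_variation(uniform))
print(sentence_lengths(varied), round(cadence_variation(varied), 2))
```

A score near zero means every sentence is about the same length, which is the flat rhythm the rewrite is trying to break up. A higher score means short and long sentences alternate more.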

Grubby’s most controversial feature is its detector‑tuned presets. Instead of a single “rewrite” mode, you get options crafted around specific tools:

● GPTZero‑optimized mode

● Turnitin‑oriented or “academic” mode

● ZeroGPT‑oriented mode

● General humanization for blogs and casual content

Each mode tweaks the rewrite strategy in ways that have tested better against those detectors in Grubby’s own experiments and in some third‑party tests.

Grubby is deliberately lean. It does not:

● Run its own AI detection (you have to copy the result into separate detectors).

● Do plagiarism checking itself.

● Guarantee evasion against any detector, old or new—no matter what its marketing copy implies.

You can think of it as a middle filter in a larger pipeline, not an all‑in‑one safety net.

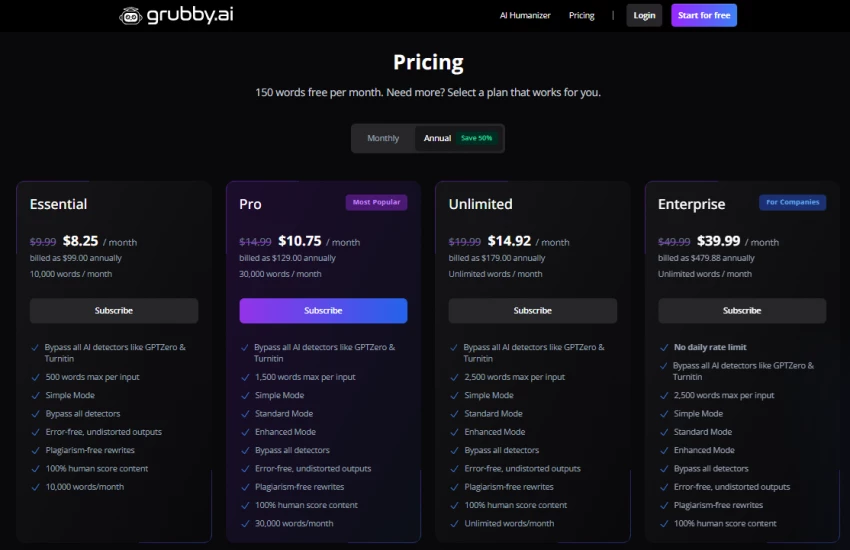

Like most AI SaaS tools, Grubby’s business model starts with a taste and escalates into tiers.

Most serious reviews converge on one detail: there is a genuine free tier with a very small monthly word allotment, about 300–400 words per month in many analyses. Some directories quote 400–500 words; some mention 300 words plus occasional promotional “airdrops” of extra credits.

That free tier is enough to:

● Test the interface and basic humanization

● Run one short essay or a couple of emails through it

● See how it behaves with your own detectors and workflows

It is not enough to cover a student’s full semester or a blogger’s content calendar.

Beyond the free plan, pricing splits into multiple tiers. Depending on the source and time of capture, the numbers shift slightly, but the structure repeats.

Grubby does not demand patience. That’s part of its appeal.

You sign up with an email (and sometimes get bonus credits for verifying). When you log in, there’s no maze of menus—just a central text box, mode selector, optional tone controls and a big, obvious “humanize” button.

1. Generate text in your favorite AI tool.

2. Paste or upload it into Grubby.

3. Choose a mode (detector‑oriented or general).

4. Hit humanize.

5. Wait a few seconds; inspect the new text.

6. Copy it out, edit manually, then run it through your own detectors and plagiarism tools.

For shorter texts, the process feels near‑instant. For long documents, there are a few seconds of processing time and sometimes more noticeable lag during peak usage.

Some plans also expose additional tools around the main humanizer:

● Summarizer for condensing text

● Smart notes, quizzes and flashcards for study workflows

These add value for students and educators, but for most content creators, the humanizer remains the star of the show.

This is where marketing meets math. To understand Grubby’s real performance, you have to look at independent tests, competitor analyses and raw user feedback.

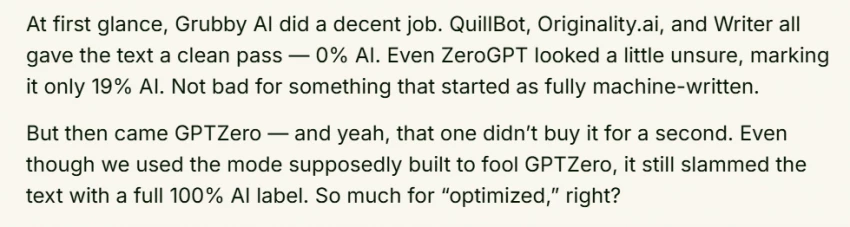

Multiple reviewers have stress‑tested Grubby against AI detectors like GPTZero, Originality.ai, Turnitin‑backed checkers and ZeroGPT.

The overall picture is mixed:

● Some tests show dramatic drops in AI probability for certain tools; for example, text falling from near‑100% AI to low double digits after humanization.

● EssayDone’s documented test showed one humanized sample reading as 0–1% AI on some detectors and only 19% AI on ZeroGPT, but it was still flagged as 100% AI on GPTZero.

● Originality.ai’s own review concluded that while Grubby can reduce some detectors’ confidence, advanced detection models like Originality.ai still identify the content as AI with high reliability.
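A short sketch shows why a mixed result like the EssayDone one is riskier than an average would suggest. The ZeroGPT and GPTZero figures are the ones quoted above; `other_detector` is a placeholder for the unnamed tools that read 0–1%, and the 50% cutoff is a purely hypothetical threshold, since real tools and institutions set their own.

```python
# Scores are percent "AI-generated" for the same humanized sample.
# GPTZero and ZeroGPT values come from the EssayDone test quoted above;
# "other_detector" stands in for the unnamed detectors that read 0-1%.
scores = {"other_detector": 1, "ZeroGPT": 19, "GPTZero": 100}

FLAG_THRESHOLD = 50  # hypothetical cutoff, an assumption for illustration only


def summarize(scores: dict[str, float], threshold: float) -> dict:
    """Return the average score, the worst score, and which detectors flag the text."""
    flagged = sorted(name for name, s in scores.items() if s >= threshold)
    return {
        "average": sum(scores.values()) / len(scores),
        "worst": max(scores.values()),
        "flagged_by": flagged,
    }


result = summarize(scores, FLAG_THRESHOLD)
print(result)
```

The average looks comfortable, but the outcome depends on the single detector your reader happens to use, so the worst score is the number that matters.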

Competitor GPTHumanizer’s extensive testing placed Grubby’s average “naturalness” improvement in the ballpark of 61.56% across its metrics, explicitly calling out that this falls well short of what serious content creators should accept. That same analysis includes examples where Grubby lowered AI‑detection scores—but not reliably enough for high‑stakes use.

On the readability front, Grubby does more consistently positive work:

● AI‑generated drafts become less repetitive, less flat and slightly more varied in rhythm.

● For short, non‑technical pieces such as emails, basic blog intros and short essays, the humanized output is often described as “good enough with some editing.”

● For complex or technical topics, reviewers report awkward phrasing, distorted meaning and the need for careful manual corrections.

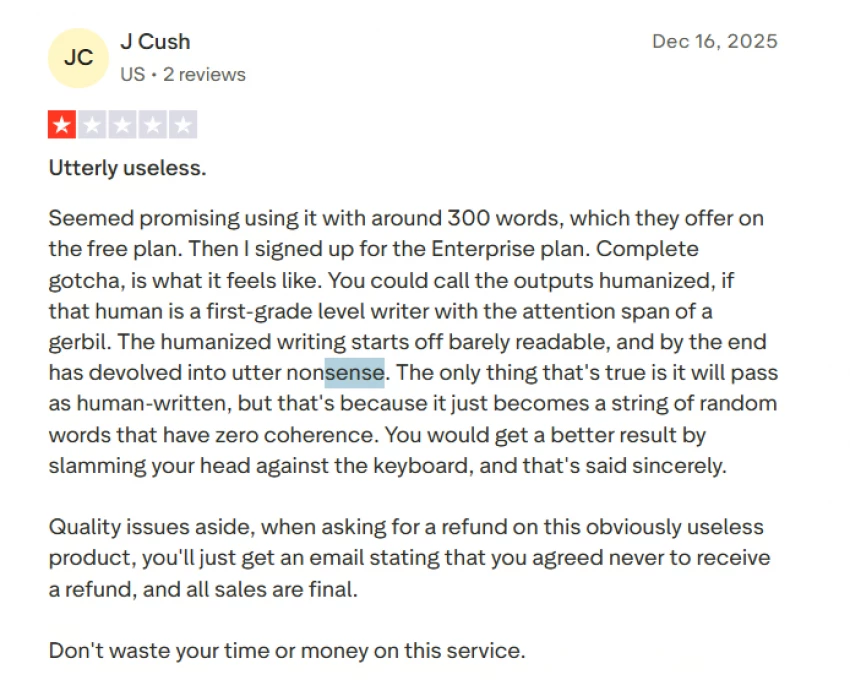

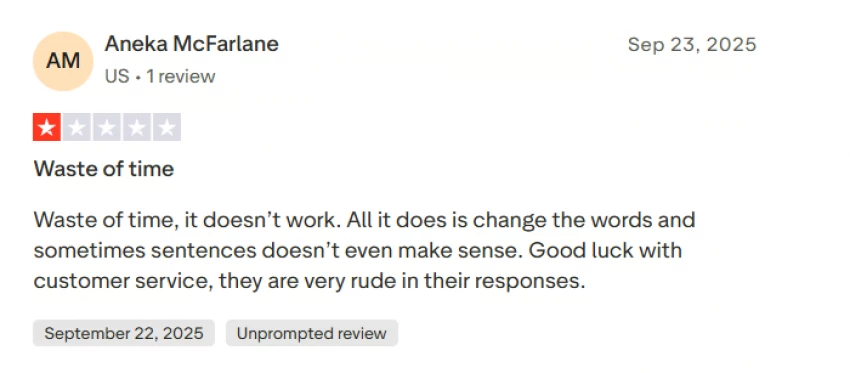

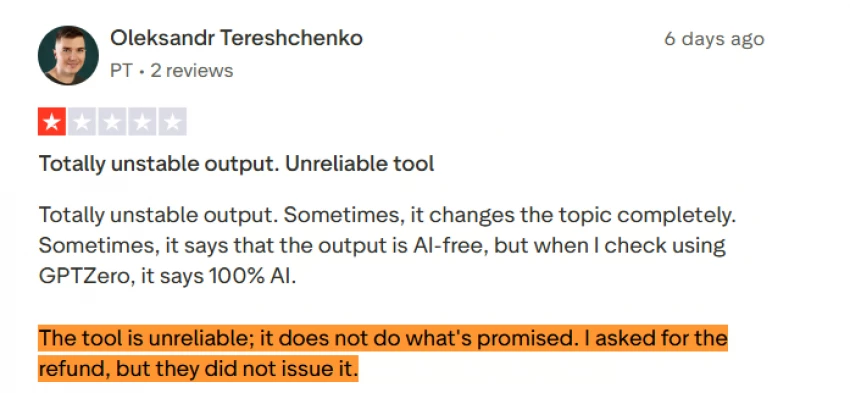

One negative Trustpilot review complains that Grubby “just changes the words and sometimes sentences don’t even make sense,” calling the writing “robot sounding.” Others echo this, saying they cancelled after seeing that both versions still got flagged as AI and that the rewriting distorted intent.

On the flip side, there are also users who say they never got detected by professors when using Grubby and that it “does exactly what it says” for them, showing that results can vary depending on detector, assignment and text type.

If you zoom out from individual anecdotes, a pattern of strengths and weaknesses emerges.

● Low friction: Clean web interface, minimal onboarding and no need for installation or extensions.

● Free tier for testing: Even with tiny limits, you can try real workloads before committing.

● Detector‑aware presets: Modes targeting GPTZero, Turnitin or ZeroGPT give users a feeling of control over which “enemy” they’re optimizing for.

● Noticeable readability improvement on simple content: For everyday text, Grubby often nudges drafts noticeably closer to “humanish.”

● No universal detector evasion: GPTZero, Originality.ai and other modern systems can still identify Grubby‑processed content as AI‑generated.

● Inconsistent results: The same piece might pass one detector and fail another, which is risky if you don’t know what your institution or client is using.

● Quality ceiling: For advanced or domain‑heavy writing, output can feel distorted, clumsy or “washed,” demanding serious manual editing.

● Limited free usage: The free tier is more like a demo than a sustainable plan for regular writers.

● Missing in‑house checks: The lack of a built‑in AI detector or plagiarism checker forces you to rely on extra tools, adding friction and cost.

Beyond test labs, Grubby’s reputation is being shaped in places like Trustpilot, Reddit and long‑form reviews from independent bloggers.

Trustpilot snapshots show a mid‑range rating (around 3.3/5 in one detailed analysis), with a mix of glowing and scathing reviews.

Common praise:

● “Easy to use and very beneficial.”

● “Top tier… helps speed up homework and I never got detected by a professor.”

Common complaints:

● “Waste of time, it doesn’t work… sentences don’t make sense.”

● “Robot sounding writing not usable; still flagged as AI even after both versions.”

● Frustration with cancellations and refunds; some users say they were charged after trying to cancel early.

On Reddit and similar forums, sentiment is similarly divided:

● Some users call it “okay for simple stuff” and say it helps them get away with light AI usage in low‑stakes contexts.

● Others portray it as overhyped, overpriced and ineffective when tested against robust detectors.

GPTHumanizer’s meta‑review is particularly critical, arguing that Grubby fails to deliver on its core promise and that better alternatives exist for serious content creators.

Tools like Grubby sit right in the collision zone between technology and rules.

Universities and schools are increasingly explicit: using AI without disclosure, or using tools specifically to bypass AI detection, can amount to academic misconduct. Even if a Grubby‑processed essay passes a detector today, that doesn’t change the fact that many institutions see this as cheating.

The risk is simple:

● If caught, penalties can be severe, ranging from failed assignments to suspension or expulsion.

● Detectors evolve and may be re‑run retroactively on old submissions, especially in high‑profile or disputed cases.

Companies are also rewriting internal policies around AI. Some demand disclosure when AI is involved; others restrict its use in sensitive work (legal, medical, financial, safety‑critical).

Using Grubby to deliberately conceal AI involvement in content that’s supposed to be human‑authored can clash directly with:

● Employment contracts

● Agency agreements

● Platform content policies

Even if no detector is ever run, misrepresentation can create legal and reputational fallout.

The responsible framing is this: Grubby is safe as a style and clarity helper for drafts you own and are allowed to edit however you want; it becomes ethically and professionally dangerous when used primarily as a cloak.

Grubby doesn’t exist in a vacuum. The “AI humanizer” niche has become its own mini‑industry, and many tools compete for the same users, each with a slightly different emphasis.

Here’s a concise positioning snapshot:

For someone who writes professionally at scale, several reviewers recommend skipping Grubby in favour of tools that deliver more consistent quality or bundle detection and plagiarism checking into a single workflow. For someone looking for a simple, cheap humanizer with a free test tier, Grubby remains a realistic contender.

If you think of Grubby AI as a miracle cloak that makes AI writing truly undetectable, it fails. The evidence is just too clear: serious detectors still catch it, quality is inconsistent, and user disappointment is well‑documented.

If you think of it as a practical, reasonably priced text filter that:

● Makes AI drafts less robotic

● Sometimes nudges detection scores down on specific tools

● Gives you a cleaner starting point before you edit by hand

it starts to make a lot more sense.

Grubby AI is best used as:

● A stylistic assistant for low‑ to medium‑stakes content

● A way to quickly “de‑AI‑ify” tone and rhythm before human editing

● One piece of a larger writing workflow that still includes detectors, plagiarism tools and your own judgment

It is not:

● A reliable way to cheat on exams or bypass strict academic policies

● A compliance solution for regulated industries

● A substitute for real editorial skill when quality truly matters

In a world where AI now writes, detects and rewrites its own footprints, Grubby AI plays the role of a quiet in‑between layer: useful, sometimes clever, occasionally frustrating, and absolutely not magic.

Grubby AI is a useful but limited humanizer: it can make AI‑written drafts sound more natural and sometimes lower detection scores, but it does not reliably beat modern detectors and often still needs careful human editing and ethical use.
