A student opens their laptop in exam season, looks at the stack of PDFs, slides, saved YouTube links and half‑finished notes and quietly panics. Jungle AI inserts itself exactly at that moment and offers a bargain: “Give me your chaos, and I’ll give you questions.”

This is not another pretty to‑do list or minimalist note app. Jungle is unapologetically about interrogation. It takes what you already have, turns it into flashcards and quizzes, then keeps bringing those questions back until you’ve answered them enough times to remember.

Strip away the animals and the trees and the soft colours, and Jungle’s core identity is simple: an AI‑driven study platform that automates the ugliest part of effective learning, namely turning content into active‑recall prompts.

You upload your materials (PDFs, lecture slides, Word docs, web pages, YouTube lectures), Jungle’s models read them, and out come flashcards, multiple‑choice questions, true/false items, and short‑answer prompts. Those questions are slotted into a spaced‑repetition engine so that the hard stuff comes back more often, the easy stuff slowly fades, and you spend less time organising and more time answering.

The tool is clearly built for exam‑oriented students: undergrads with overloaded syllabi, medical and law students buried in dense content, and competitive‑exam aspirants who don’t have the luxury of building handcrafted flashcards for every topic. Jungle AI doesn’t try to be a general productivity system. It tries to be the place you go when you have content and need questions, now.

The first session with Jungle doesn’t feel like configuring software; it feels like checking into a programme.

Account creation is quick, but before you can wander around aimlessly, the app asks what you’re studying and what’s coming up. Exams, subjects, timelines: none of this is decoration. Jungle wants to frame everything you do in terms of concrete outcomes. You pick an animal avatar, land in a stylised jungle interface, and from that moment your progress is visualised as a landscape that grows with your work.

The real “oh” moment comes when you drop in your first file. Take, for example, a 30‑page PDF chapter on renal physiology. You upload it, Jungle churns for a bit, and then presents a sequence of generated cards and questions. Before you even think about “deck management,” it pushes you to start a session: ten questions, low stakes, immediate feedback.

What stands out in that first run is the tone. Correct answers get small, affirming animations. Wrong answers don’t trigger punishment; they trigger explanations and a link back to the exact paragraph in the PDF. At the end, instead of a flat “You scored X%,” you see a tree that has grown a little taller and a suggestion: “We’ll revisit the tricky topics tomorrow.”

There’s a subtle design decision here. Jungle doesn’t want to be another app you configure and abandon. It wants to feel like a guided routine that you can drop into with minimal friction, especially on days when motivation is low.

Once the first‑time novelty wears off, the question becomes: is Jungle’s AI any good at actually understanding your materials?

The ingestion pipeline handles a familiar list of formats: standard documents, slides, notes, web articles, and videos. Under the hood, the system breaks these inputs into chunks (concepts, definitions, sequences, comparisons, cause‑effect pairs), then tries to decide which of those are worth turning into questions.

When you feed Jungle AI a well‑written textbook chapter, the results are impressive. Definitions become clean front‑back flashcards. Conceptual sections turn into “which of the following is true” or “what happens when” MCQs. Lists produce questions that ask you to identify missing elements or exceptions. You get the feeling that a competent teaching assistant has gone through and extracted the obvious examinable points.
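To make the "definitions become front‑back flashcards" step concrete, here is a deliberately crude sketch. It is not how Jungle works: the review only says that Jungle's models do this, so a regular expression is swapped in purely for illustration. The names `Flashcard`, `DEFINITION`, and `definition_cards` are hypothetical, and the sample text is an invented renal physiology snippet.

```python
import re
from typing import NamedTuple


class Flashcard(NamedTuple):
    front: str
    back: str


# Toy heuristic (an assumption, not Jungle's method): a sentence shaped like
# "<Term> is/are <definition>" is treated as a definition.
DEFINITION = re.compile(r"^(?P<term>[A-Z][^.,;:]{1,60}?) (?:is|are) (?P<body>.+)$")


def definition_cards(text: str) -> list[Flashcard]:
    """Turn definition-like sentences into front/back flashcards."""
    cards = []
    # Naive sentence split after ., ! or ?; real documents need something sturdier.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        match = DEFINITION.match(sentence.rstrip(".!?"))
        if match:
            body = match["body"]
            cards.append(
                Flashcard(
                    front=f"Define: {match['term']}",
                    back=body[0].upper() + body[1:] + ".",
                )
            )
    return cards


sample = (
    "The nephron is the functional unit of the kidney. "
    "Filtration begins in the glomerulus. "
    "Glomerular filtration rate is the volume of fluid filtered per minute."
)

for card in definition_cards(sample):
    print(card.front, "->", card.back)
```

The middle sentence is skipped because it does not have the "X is Y" shape. That is also the weakness of any pattern-based approach: sparse slides with three words and a diagram give it nothing to match, which lines up with the rougher results on messy inputs.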

The illusion breaks slightly with more chaotic inputs. Lecture slides that contain three words and an unexplained diagram give the model very little to work with. It still produces questions, but some will be vague or oddly phrased, forcing you to curate. In these situations, Jungle behaves less like a magic exporter and more like a rough draft generator: it will give you something, but you are responsible for cleaning it up.

The same is true for YouTube lectures. When auto‑captions are clean and the lecturer is structured, Jungle creates solid, time‑anchored questions and lets you jump straight back to the relevant timestamp. When captions are full of errors, the quality of the questions drops. Jungle can’t fix a mumbling professor.

What matters is that you always retain editorial control. After generation, you can skim through the cards and questions, delete what feels off, and tweak the wording of anything ambiguous. The AI saves you from starting with a blank page, but you remain the final arbiter of quality, which, for serious exams, is exactly how it should be.

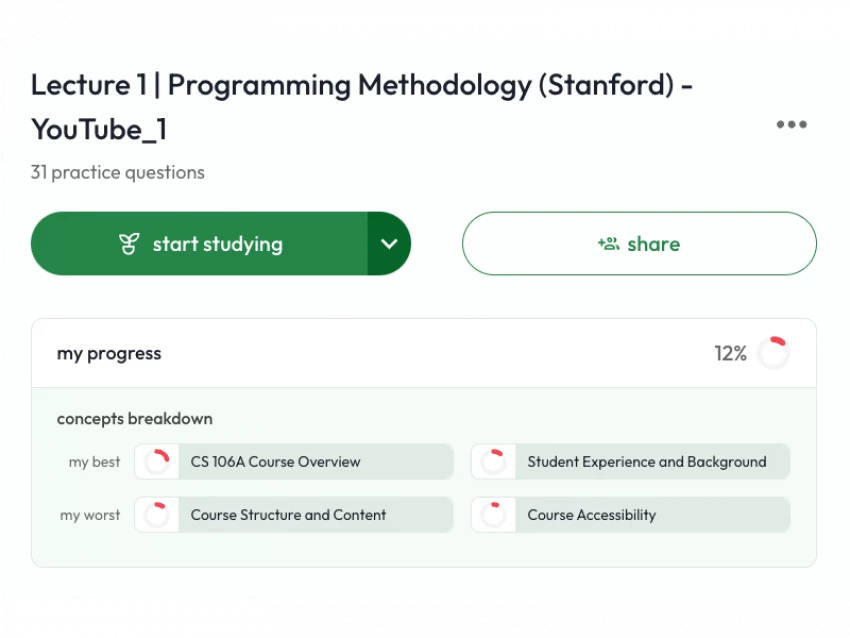

If ingestion is the engine, the daily session is the driver’s seat. Jungle lives or dies on how it structures your interaction with those questions over time.

A typical session pulls in a mix of formats. You might start with a recall‑style flashcard (“What is the definition of X?”), move into a multiple‑choice item that tests nuances, bounce through a couple of true/false statements for speed, and land on a short answer question that forces you to articulate a process in your own words.

Each answer leads to a small fork. If you’re correct and confident, the explanation is quick and confirms your understanding. If you’re wrong, Jungle leans on retrieval plus context: it shows the correct answer, provides a short reasoning or summary, and offers a button to jump straight to the original source segment. You are never left with a bare “wrong”; the app always points you to where you can repair the misconception.

Spaced repetition is largely invisible. You don’t fiddle with algorithms or card intervals. Instead, Jungle tracks your performance, tags items internally as strong or weak, and decides when to resurface them. Difficult concepts come back more often, easy ones less. Old content never disappears completely, but the frequency adjusts as you prove yourself.
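Jungle does not publish its scheduling logic, so the following is only a minimal sketch of the general idea, using a classic Leitner‑style box system as a stand‑in. The interval values, the `Card` fields, and the function names are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical review gaps in days for boxes 0..4; not Jungle's real values.
INTERVALS = [1, 2, 4, 8, 16]


@dataclass
class Card:
    prompt: str
    box: int = 0          # 0 = weakest, len(INTERVALS) - 1 = strongest
    due: date = date.min  # date.min means "due immediately"


def review(card: Card, correct: bool, today: date) -> Card:
    """Promote a card one box on a correct answer, reset it to box 0 on a miss."""
    if correct:
        card.box = min(card.box + 1, len(INTERVALS) - 1)
    else:
        card.box = 0
    card.due = today + timedelta(days=INTERVALS[card.box])
    return card


def due_cards(cards: list[Card], today: date) -> list[Card]:
    """Return the cards due on or before today, weakest first."""
    return sorted((c for c in cards if c.due <= today), key=lambda c: c.box)
```

In this sketch a missed card drops back to a one‑day gap while a card you keep getting right drifts out to the longest interval and stays there. The cap on the top box is what keeps old content from ever disappearing completely.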

This approach has two consequences. First, it dramatically lowers the barrier to entry for students who have heard of spaced repetition but never wanted to deal with its mechanics. Second, it removes the sense of “deck micromanagement” that can turn Anki into a part‑time job. You show up, push through the set Jungle has prepared, and trust the system to handle scheduling.

The trade‑off is obvious: you give up meticulous control. You can’t hand‑craft intervals, suspend individual cards for months, or tune the scheduling formula. Students who love squeezing every last percent out of SRS will feel constrained. For everyone else, the simplification is a feature, not a bug.

On paper, gamification sounds like fluff. In practice, for stressed students, it often decides whether an app is opened at all.

Jungle’s aesthetic is deliberately soft. You’re not presented with cold statistics and red overdue counters; you’re shown a landscape that grows when you do the work. Your avatar gains subtle upgrades as you maintain streaks. Trees and plants respond to your efforts, giving a quiet sense of stewardship over your own progress.

The copy mirrors this mood. Jungle does not yell about streaks or shame you with “You missed 3 days!” notifications. When you disappear, it invites you back with specific, manageable offers: “We’ve prepared a short review for Topic X to help you pick up where you left off.” When you struggle with certain concepts, the language frames them as “still in progress,” not as failures.

This design philosophy matters for the audience Jungle is courting. Many serious exam takers are already living under a heavy cloud of pressure: from parents, institutions, future careers. They don’t need their study tool to be another judge. They need it to be a structured environment that recognises effort, catches mistakes early, and doesn’t punish imperfection.

Does this risk turning learning into a game of growing trees rather than understanding material? It could, if the underlying questions were shallow. But because Jungle keeps tying questions back to original sources and explanations, the gamification sits on top of a reasonably robust learning process rather than substituting for it.

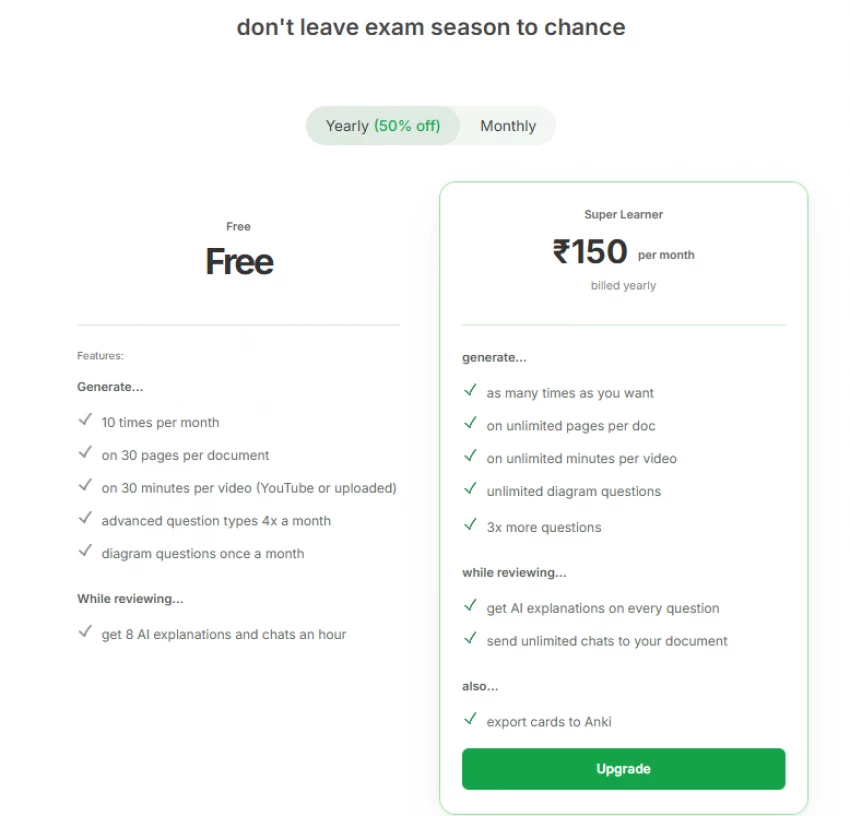

The economic question around Jungle is straightforward: does it save you enough time and cognitive friction to justify a monthly fee?

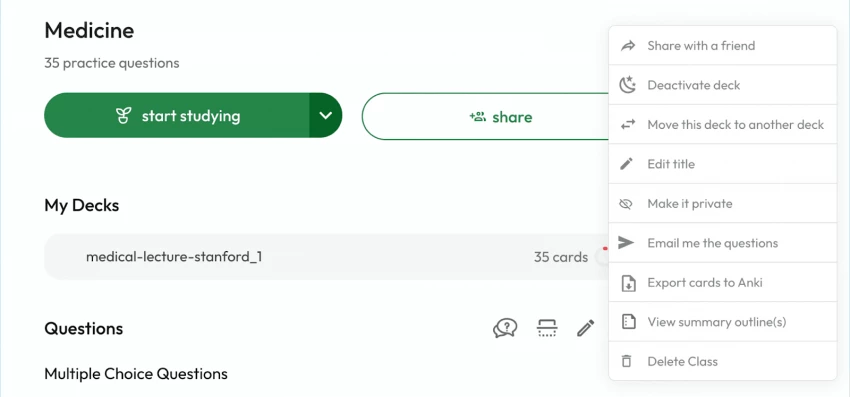

The free tier gives you a taste: a limited number of AI generations per month, caps on document length and video duration, and restrictions on how many advanced questions or AI explanations you can invoke. For an occasional course or a light semester, this may genuinely be enough. You can throw in a chapter here, a lecture there, and use Jungle as an “on demand quiz maker” without paying.

As soon as you start preparing seriously (multiple subjects, large volumes, long videos), you will feel the walls closing in. That is where the paid “Super Learner” plan steps in. For the price of a couple of coffees a month (less if you pay annually), it effectively removes or greatly raises the ceilings. You can process bigger files, generate more questions per unit of content, lean heavily on AI explanations and chat, and export your cards into other systems like Anki.

Think of it this way: manual card creation for a heavy course load can easily eat five to ten hours a week if you do it properly. If Jungle can compress most of that into one or two hours of generation and curation, and free the rest for actual recall practice, the value proposition becomes obvious, especially for fields where failing an exam is far more expensive than a small subscription.
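The arithmetic behind that claim is simple enough to spell out. The snippet below uses only the ranges quoted above; the function name is a hypothetical helper, and pairing the extremes is just one way to bracket the estimate.

```python
def weekly_hours_saved(manual_hours: float, jungle_hours: float) -> float:
    """Hours per week freed up by drafting with AI instead of by hand."""
    return manual_hours - jungle_hours


# Ranges from the text: 5-10 h of manual card writing versus
# 1-2 h of generation and curation.
low = weekly_hours_saved(manual_hours=5, jungle_hours=2)    # most conservative pairing
high = weekly_hours_saved(manual_hours=10, jungle_hours=1)  # most optimistic pairing

print(f"Saved per week: {low:.0f} to {high:.0f} hours")
# prints "Saved per week: 3 to 9 hours"
```

Even the conservative end hands back three hours a week for actual recall practice, which is the number to weigh against the subscription.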

The only students for whom the price feels unnecessary are those who either study sporadically or already enjoy the ritual of building cards by hand in a tool they’ve mastered. For them, Jungle’s automation is nice‑to‑have rather than essential.

No study app exists in isolation, and Jungle certainly doesn’t. It arrives in a world where Quizlet and Anki already dominate their respective niches.

Quizlet is the familiar face in classrooms, known for its huge library of shared decks and simple, teacher‑friendly interface. It does offer AI features at higher tiers, but the core experience is still centred on manually created sets or public decks. It’s great when your teacher has already built the deck for you; less great when you have to build everything yourself.

Anki lives at the other end of the spectrum. It is the tool of choice for students who treat spaced repetition like a craft. You define card types, tweak scheduling parameters, install plugins, and build intricate hierarchies of decks and tags. The payoff is immense control and efficiency; the cost is a steep learning curve and the ongoing labour of writing cards.

Jungle advances a different thesis: what if the AI did the majority of the drafting, the system handled the scheduling, and your main job was to show up, prune, and answer?

Look at it through that lens and the positions clarify. Jungle is not trying to dethrone Anki for people who enjoy that level of control. Instead, it tries to capture the large cohort of students who know they “should do active recall with SRS” but never get past step one because cards and intervals feel like admin.

Against other AI‑first flashcard tools, Jungle’s advantage is coherence. Many competitors can generate questions from text; fewer wrap that in a full learning loop with thoughtful UX, a calm tone, and a clear sense of progression. Jungle feels less like a demo of AI capabilities and more like a crafted product.

It’s easy to praise any tool in theory. The only meaningful test is what it does under exam pressure.

In a classic pre‑exam sprint, two or three days before a midterm, Jungle’s strengths become obvious. You dump in chapters, slides, maybe even a key YouTube revision lecture. Instead of spending half the day extracting questions manually, you let Jungle draft them. You then do a quick pass: delete obviously weak items, improve wording where needed, and hit “study.” In that context, Jungle behaves like a tireless question author that lets you behave like a reviewer and test‑taker.

Over a multi‑week window, the spaced repetition takes over. As you feed it more content, Jungle interleaves older topics with new ones, reinforcing connections and preventing earlier units from decaying. Your sessions start to feel like revisiting a map: each time, a different part of the jungle lights up, but you never fully lose sight of the parts you’ve already covered.

The messy‑input scenario is where your expectations must be realistic. If you’ve spent an entire semester collecting half‑legible screenshots from WhatsApp groups and scribbling incomplete notes, Jungle will still produce questions—but you will hit a ceiling on quality no matter how good the AI is. The tool can refine; it can’t invent context you never had. In such cases, the mere act of curating Jungle’s output may serve as a wake‑up call about your upstream habits.

Across these stress tests, one conclusion is hard to escape: Jungle amplifies good habits and material. It can’t fix a broken study ecosystem, but it can turn a decent one into something much more efficient.

Any AI‑enhanced study platform lives with three unavoidable critiques.

The first is accuracy. Jungle is not a fact‑checker; it’s a transformer. If your PDF misstates a formula or your lecturer uses outdated guidelines, the resulting questions will faithfully echo those errors. In critical domains, this means you still need authoritative sources and, where possible, cross‑checking against official material. Jungle’s usefulness doesn’t remove your responsibility to verify.

The second is depth. Multiple‑choice and short‑form questions can give the illusion of mastery long before it’s real. Jungle makes that format extremely easy to consume. If you never move beyond tapping options and reading short explanations, you may end up with a shallow, exam‑specific understanding. The healthiest way to use Jungle is as a strong retrieval layer sitting on top of deeper work: problem sets, essays, labs, simulations.

The third is data. Your materials are being uploaded and processed on someone else’s servers. For most students, this means standard textbooks and lecture slides—not particularly sensitive. But anyone dealing with internal documents, patient data, or proprietary information needs to read the fine print, anonymise where possible, or keep certain documents offline.

Trust, in this context, is not blind faith that the app is perfect. It’s confidence that you understand what Jungle does well, where it’s fallible, and where you must remain in charge.

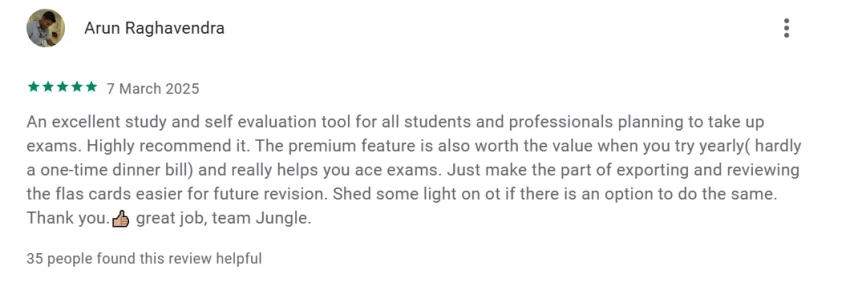

Students’ own feedback tends to cluster around a few clear points.

● It generates quizzes, flashcards, and MCQs from PDFs, slides, YouTube and other files in seconds, so they don’t have to build everything manually.

● The AI doesn’t just quiz them; it also explains concepts, creates notes, and offers personalised analogies, which many reviewers say makes learning “so much nicer” and easier to understand.

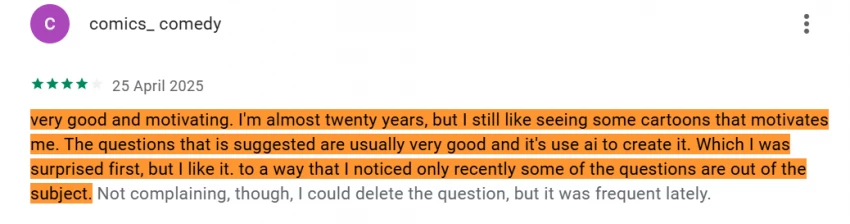

● Daily spaced‑repetition reviews and progress tracking (the growing jungle tree, cartoon animals) are described as “very good and motivating,” even by older students coming back to school.

● Users highlight that it helps them actually apply learning‑science ideas (like spaced repetition) without having to configure anything complicated.

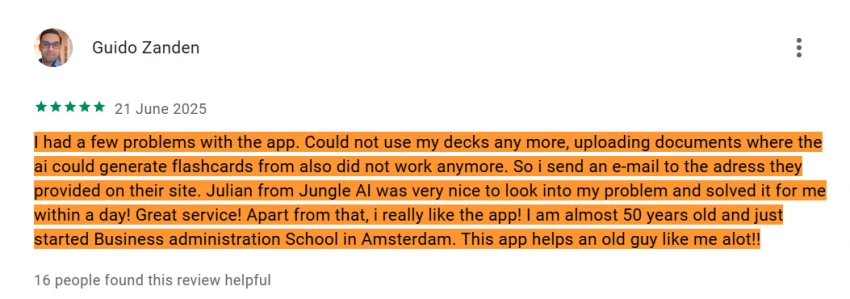

● Several reviewers praise the support: when decks or uploads stopped working, they emailed the team and had the issue fixed within a day, calling it “great service.”

● Some note that the AI doesn’t always recognise that two phrases mean the same thing, or that “some of the questions are out of the subject,” so they occasionally need to delete or tweak generated items.

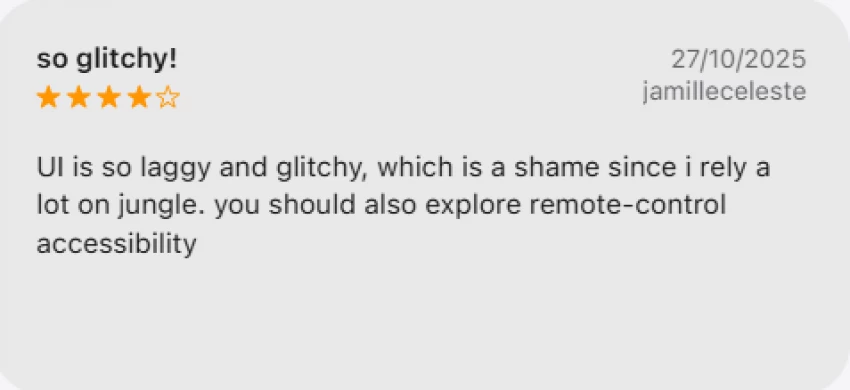

● A few users report glitches: decks temporarily unusable, uploads failing, or general bugs—often around the time they upgraded—though many of these are followed by a dev response and a fix.

● There are hints that, as generation has grown more powerful, off‑topic questions have become “more frequent lately” for some users, especially with weaker source material.

Taken together, the pattern is clear: students see Jungle as a very helpful, time‑saving study machine with rough edges around bad inputs and some technical or feature gaps, rather than as a flawless replacement for every other tool.

If we strip this review down to personas, Jungle’s “core fan” is easy to spot.

They are typically juggling multiple subjects, each with heavy reading loads. They understand that active recall and spaced repetition are powerful but have never managed to build and maintain a full card system by hand. Their main pain is not ignorance of learning science; it’s the grind of implementing it consistently.

For them, Jungle is a relief. It automates the tedious bits, wraps them in a friendly interface, and nudges them into daily habits. Instead of surviving on last‑minute re‑reading, they actually get weeks of question‑based practice—with far less setup overhead than an Anki empire.

On the other side, there’s the student who loves control. Their decks are already meticulously tagged, their Anki settings tuned, their plugins curated. For them, Jungle is less a replacement and more a helper: a fast way to draft questions from new material that they might later export and refine in their existing system. They will appreciate the speed but chafe at the guardrails.

And then there’s the casual learner: someone who occasionally uses Quizlet sets for a language or a hobby subject. Jungle can work for them, but it’s arguably overkill unless they’re about to enter a high‑stakes exam season.

Every study app promises to “revolutionise learning.” Most don’t. At best, they rearrange your tasks or give you nicer timers.

Jungle AI’s claim is more modest and, in many ways, more interesting. It doesn’t promise to make you smarter. It promises to stop wasting your limited time on the lowest‑value part of good studying: turning rich content into testable questions and tracking when to revisit them.

When used seriously (with good inputs, some editorial care, and a willingness to show up regularly), Jungle feels less like a gadget and more like an extra pair of hands in your study life. It won’t sit your exam. It won’t read the textbook for you. But it will relentlessly turn what you read into questions, and keep putting those questions in front of you until the material sticks.

For the majority of exam‑driven students who know what they should be doing but keep getting stuck in the admin of studying, that’s not a gimmick. It’s an upgrade.
