Muke AI is an AI‑powered image manipulation suite best known for two things: undressing people in photos (nudify/deepnude‑style) and hyper‑realistic AI face swaps for photos and videos. It is available through multiple branded endpoints such as “Undress AI” and “Face Swap,” often positioned as free, instant, browser‑based tools for anyone with an internet connection.

Beyond the NSFW angle, some sources and satellite sites around the brand also present “Muke AI” as a more general creative assistant for marketers and content creators, one that offers AI‑generated visuals, prompts, and content workflows. That dual identity is at the heart of why this ecosystem is so polarizing: it mixes legitimate creative tooling with technologies that can be weaponized for deepfake porn and harassment.

When you say “Muke AI,” you are not talking about a single monolithic product. You’re talking about a cluster of related tools that share a common branding and underlying idea: using AI to manipulate human images.

This is the most controversial part of the ecosystem. Undress AI tools under the Muke umbrella are built to digitally remove or alter clothing in images. Key traits:

● One‑click “clothes remover” or “deepnude” style operation.

● Modes like “AI Clothes Remover,” “Sexy Portrait,” or “Lingerie/Bikini” variants.

● Targeted at adult/NSFW scenarios, but technically usable on any photo, which is where the risk explodes.

The promise is simple but disturbing: upload a clothed image of a person, and the AI generates a version where they appear partially or fully nude.

The face swap side is a bit more mainstream, as it overlaps with meme, cosplay, and entertainment use cases:

● Users upload a base image or video and a face image.

● The system detects faces and replaces them with the target face while preserving expressions, angles, and lighting as much as possible.

● Many versions promise fast processing (often seconds per image) with high‑resolution output.

This tool can produce harmless memes but also extremely realistic deepfakes if abused.

Around the core NSFW tools, there is also a broader narrative of “Muke AI” being:

● A text‑to‑image generator for marketing visuals, social media content, and blog illustrations.

● A creative assistant for ideas, captions, and campaigns.

● A simple, browser‑based platform aimed at creators, marketers, and small businesses.

This side of the ecosystem looks similar to mainstream AI design/creative tools, except that it co‑exists with undress and face‑swap functionality under the same or related branding, which raises a brand‑safety red flag for serious businesses.

In this section, we’ll go into the actual mechanics of what Muke AI does, rather than staying at the buzzword level.

How It Works (Conceptually)

From a user’s perspective, undress tools typically work in a few simple steps:

1. Upload a photo of a person—front‑facing or slightly angled shots work best.

2. Choose a mode: e.g., “bikini,” “lingerie,” or “full nude”; some tools present this as “Sexy Portrait” or “NSFW level.”

3. Let the AI process the image; within a few seconds, you get a new image where the clothing has been algorithmically removed or replaced.

Under the hood, the model is essentially hallucinating a plausible naked body based on shape, pose, and lighting. It is not “revealing” real hidden information; it is generating an illusion that appears real enough for harassment, blackmail, or humiliation.

Output Quality

Generally, modern nudify tools can:

● Produce convincing skin textures, shadows, and body contours.

● Adapt to different skin tones and lighting conditions.

● Occasionally struggle around edges—hands, hair, accessories, and complex clothing patterns can create artifacts or uncanny transitions.

For erotic art or fantasy images using synthetic or stock‑like bases, the results can be visually impressive. For real people, the realism is dangerous because viewers may not be able to distinguish AI‑generated nudity from reality at a glance.

Workflow & Usability

From a UX point of view, undress flows are usually:

● Browser‑based (no app installation required).

● Fast (seconds to process a single image).

● Sometimes accessible without an account, at least for low‑resolution or watermarked outputs.

● Monetized through credits, subscriptions, or pay‑per‑image, often with higher resolution and watermark removal behind a paywall.

The friction is intentionally low, which makes them more viral—but also easier to misuse impulsively.

Face swap technology is now everywhere, and Muke AI is riding that wave with:

Photo Face Swap

● You upload a “base” image that will keep the body/background.

● You upload a face image (your own or someone else’s).

● The AI detects the face(s) in the base and maps the new face with matching angle, expression, and color balance.

● In a good implementation, it preserves hair, background, and overall composition.

Video Face Swap

● Similar concept extended to video: the AI tracks the face across frames and applies the new identity.

● This enables meme videos, parody clips, and—on the darker side—pornographic deepfakes with a real person’s face.

Strengths and Weaknesses

Strengths:

● High realism when source images are clear and well‑lit.

● Automatic blending of skin tones and lighting.

● Reasonably fast processing for short clips and single images.

Weaknesses:

● Artifacts in low‑light or motion‑heavy scenes.

● Warped faces if angles don’t match.

● The better it gets, the worse the deepfake problem becomes socially.

On the “legitimate” side, Muke‑branded tools and related platforms often pitch:

● Text‑to‑Image: Turn prompts into marketing visuals, thumbnails, and stylized images.

● Content‑Aid: Generate ideas, captions, or simple copy blocks for campaigns.

● Workflow Simplification: Quick mockups for ecommerce, social ads, and banners without needing a full‑fledged design team.

If you isolate this piece from the undress/deepfake side, it looks like yet another AI creative assistant. The problem is the co‑branding and user journey: a creator exploring “Muke AI” will inevitably bump into NSFW offerings and deepfake capabilities.

Let’s look at Muke AI from a more practical, product‑manager lens: what does using it actually feel like?

Most Muke‑style tools stick to a very similar pattern:

● Clean, simple landing pages with big CTAs like “Try Undress AI Free” or “Start Face Swap Now.”

● Drag‑and‑drop upload area or “Upload Image/Video” buttons.

● Minimal fields to fill, often with no mandatory registration for a first try.

● NSFW disclaimers that may be present but are usually not prominent, especially compared to the promotional visuals.

In terms of usability, that’s great. In terms of safety and ethics, it’s the opposite: low friction plus high‑risk features is a dangerous mix.

Face swap and undress operations are typically quick:

● Undress images: usually a few seconds per image.

● Photo face swaps: similarly fast, especially on smaller resolutions.

● Video face swaps: can take longer depending on clip length and server load.

From a user’s perspective, this speed is a major appeal: it feels instant and playful. But the very same instant gratification is what encourages experimentation on people’s images without thinking through the consequences.

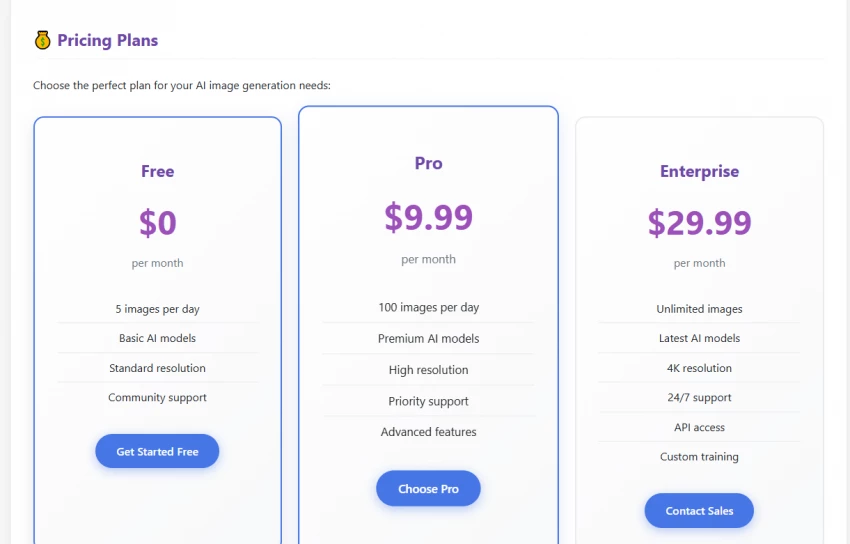

While the specifics vary by host/domain, common patterns include:

Free Tier:

● Limited number of free images.

● Watermarked outputs.

● Lower resolution or limited features.

Premium Options:

● Subscription tiers (monthly or yearly).

● Credits or “coins” for on‑demand processing.

● Access to HD/4K export, watermark removal, video length upgrades, priority queues.

For someone using it as a private adult‑fantasy or creative toy, these models can look fair and accessible. For someone evaluating ethical products, the monetization of deepfake nudity and face swap capabilities is deeply problematic.

Many high‑risk AI tools now emphasize some variation of:

● “We do not permanently store your photos.”

● “Images are encrypted and deleted shortly after processing.”

● “We use secure servers and protect your data.”

Even if such claims exist, there are key issues:

● They may not be independently audited.

● The policy language can be vague about logs, backups, or model training.

● Jurisdiction (where servers and company are based) matters for enforcement—and is often unclear.

With “nudify” and deepfake apps, the main privacy and consent risks are:

1. Non‑consensual usage

● People upload photos of others (friends, ex‑partners, colleagues, influencers) without consent.

● The resulting explicit images can be shared, weaponized, or used for blackmail.

2. Long‑term storage and leaks

● Even if the UI says images are deleted, there is no easy way for an average user to verify.

● If servers are compromised or data is mismanaged, thousands of intimate or fake‑intimate images could leak.

3. Training on user images

● Some generative platforms train models on user uploads.

● If such practices exist here, it would mean the system is learning from sensitive, explicit data tied to real people.

Globally, regulators, platforms, and even app stores are starting to crack down on AI “nudify” and deepfake tools:

● Several investigations and reports have flagged nudify apps as enablers of non‑consensual explicit imagery and harassment.

● Lawmakers in multiple countries are proposing or passing legislation targeting deepfake porn and unauthorized AI sexualization.

● App stores (and even ad networks) are under pressure to remove or restrict such tools.

Even if Muke AI or similar tools haven’t yet been banned in your region, using them on others without explicit consent can easily fall under harassment, defamation, revenge porn, or cybercrime laws depending on local statutes.

Whether Muke AI is safe to use depends heavily on what you do with it:

Relatively safer context: You should use it only on your own images or on fully synthetic or stock characters, strictly for private adult fantasy or artistic experimentation. Always understand that any outputs created are entirely fictional and must never be presented or implied as real.

Clearly unsafe/illegal context: Using real photos of people without their knowledge or consent is unethical and violates their privacy and rights. You must never share, publish, or use generated images to threaten, harass, or harm someone in any way. Such actions can cause serious personal and legal consequences and should be strictly avoided.

In certain contexts, Muke AI–style features can be used in ways that are consensual and ethically contained:

Consensual adult imagery:

● Partners experimenting with fantasy renders of themselves with full mutual consent.

● Individuals exploring body image, lingerie looks, or erotic art of their own likeness.

Entertainment and memes (face swap):

● Swapping faces with consent between friends for jokes and social posts.

● Cosplay previews—seeing yourself as a character or celebrity lookalike.

● Fun, clearly labeled parody content.

Generic creative work:

● Using the broader Muke AI‑type tools for marketing visuals and blog imagery that do not involve real faces or nudity.

● Generating abstract, stylized, or fictional characters.

In these cases, the main risks are still privacy (image handling) and potential leaks, but the ethical dimension is more manageable.

Unfortunately, the same capabilities can be easily abused in ways that are socially and legally toxic:

Non‑consensual deepfake porn:

● Taking photos of colleagues, classmates, influencers, or family members and undressing them with AI.

● Fabricating explicit images of people and spreading them through social media or private groups.

Blackmail and coercion:

● Generating fake nudes of someone and threatening to send them to their contacts.

● Using explicit deepfakes as leverage in abusive relationships.

Reputation damage and harassment:

● Posting fabricated nudes of public figures, activists, or ordinary people online.

● Targeted campaigns to shame or silence individuals.

Political and social manipulation:

● Using face swaps to make people appear in compromising videos.

● Blurring the line between reality and fabrication in high‑stakes contexts (elections, movements, protests).

The brutal reality: the more realistic and accessible tools like Muke AI become, the more difficult it is for victims to prove an image is fake—and the more normalized such abuse risks becoming.

On the nudify front, Muke‑style tools compete with several similar offerings that:

● Also promise one‑click clothes removal and sexy portraits.

● Offer free trials with premium upsells.

● Market themselves as “for fun” but are widely used for non‑consensual deepfakes.

In this space, the differentiators are:

● Image quality: Some tools are better at realistic skin and fewer artifacts.

● Speed: Faster tools feel more engaging and addictive.

● UI polish: Simpler, cleaner flows win users quickly.

● Safety measures: Age gates, explicit bans in TOS, content moderation, and watermarking.

The uncomfortable truth: Muke AI is part of a larger wave, not an isolated outlier. The overall category is inherently high‑risk.

Versus more mainstream face swap apps (like those for fun photo filters or entertainment), Muke AI‑style tools:

● Often push more toward the NSFW and explicit edge.

● May support higher‑resolution outputs that look more convincing.

● May lack some of the safety/consent checks that more regulated apps are beginning to adopt.

If a user simply wants memes and funny edits, safer and more regulated apps are usually a better choice than NSFW‑forward platforms.

Relative to generic AI art or marketing tools:

● The creative side of Muke AI offers overlapping features (text‑to‑image, content aid).

● However, the brand’s association with undress and deepfake porn makes it a poor fit for serious brands, agencies, or corporate environments.

● Many businesses will avoid tools that appear in the same breath as “nudify” and “deepfake nudes,” regardless of their capabilities.

For creators who care about long‑term reputation and client trust, purely creative platforms without NSFW/deepfake associations are more future‑proof.

Pros:

● Advanced AI Performance: The tool delivers powerful capabilities like realistic face swaps and convincing image manipulation. It processes tasks quickly and maintains a smooth user experience, making it efficient even for complex edits.

● Easy Accessibility: With a low entry barrier, users can often access it directly through a browser without mandatory sign-up. The freemium model allows beginners to experiment and explore features without upfront cost.

● Strong Creative Potential: Beyond basic edits, the technology supports diverse creative uses such as fantasy art, cosplay previews, marketing visuals, and social media content, enabling users to produce imaginative and professional-looking outputs.

Cons:

● Extremely High Abuse Potential: These tools can be misused to create non-consensual deepfake content or harassment material with very little effort. Because they are optimized to work with real people’s images, the barrier to harmful misuse is dangerously low.

● Serious Ethical Concerns: The technology can normalize digitally altering or “undressing” individuals without their consent. This undermines trust in visual media and disproportionately harms women and marginalized groups who are more often targeted.

● Legal and Reputational Risks: Depending on local laws, creating or sharing manipulated images of real people may lead to civil lawsuits or criminal charges. Individuals, brands, or creators associated with such tools may also suffer long-term reputational damage.

● Privacy Uncertainty: Users must rely on platform claims about data deletion and security without always having independent verification. Any breach, leak, or misuse of stored images could cause severe and irreversible harm to those depicted.

You might consider using it only if:

● You are experimenting privately with your own images or fully synthetic/stock‑like content.

● All participants are fully informed and explicitly consenting adults.

● You never share the content in ways that mislead or harm.

● You accept the privacy risk that comes with uploading intimate or face‑identifiable content to any third‑party server.

You should absolutely not use it if:

● You’re tempted to undress or deepfake someone without their explicit consent.

● You plan to publish or share fake explicit images of real people.

● You are underage, or you are dealing with images of minors (which is both unethical and illegal in most jurisdictions).

● You are in any professional context where brand safety and compliance matter.

Muke AI showcases cutting-edge image AI with fast, highly realistic undress and face-swap results, but it operates in a space where the risks of privacy violations, non-consensual use, and legal liability are high. While technically impressive for fully consensual experiments, its strong link to nudify and deepfake porn makes it unsuitable for most users and risky for brands or professionals.

Safer SFW face-swap and mainstream creative AI tools usually provide better value with far less risk. Unless you fully understand and accept the ethical and legal stakes, the most responsible choice is to avoid Muke AI or use it only in strictly private, fully consensual contexts.
