ThotChat AI no longer exists as a functioning platform, yet its brief life still shapes how NSFW AI companions are understood in 2026. A clear post‑mortem benefits from walking through what the product really offered, how it grew, how it failed, what impact the shutdown had on users, and which concrete tools now occupy its niche.

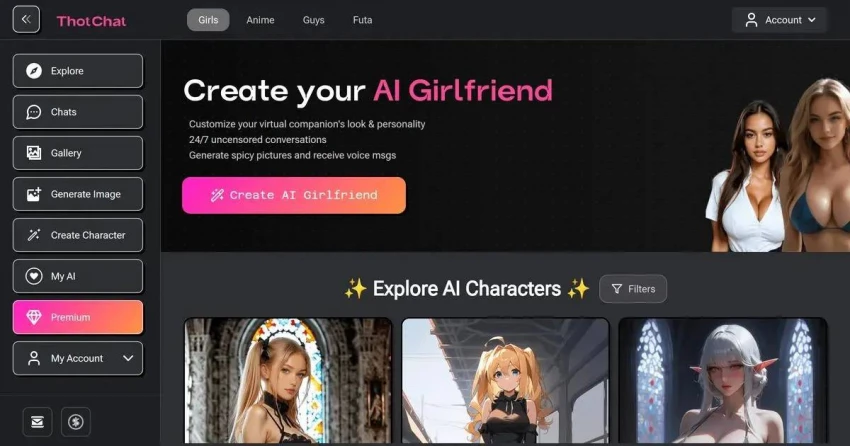

ThotChat AI operated as an adults‑only AI companion service built around erotic roleplay, sexting, and emotional intimacy. The interface was deliberately simple: a web page, an age gate, and an immediate path into private chat with an AI “girlfriend” or other adult character.

Characters were the centrepiece. Each one combined a profile image, personality traits, a basic backstory, and implicit sexual boundaries. Conversations ranged from light flirting and romantic small talk to explicit multi‑scene fantasies. Memory systems allowed characters to recall details such as the user’s name, preferred scenarios, favourite kinks, and the status of the relationship (girlfriend, wife, mistress, online crush). This continuity created the sense of an ongoing, evolving connection rather than isolated chats.

Visual content amplified immersion. NSFW image generation produced pictures that roughly matched the unfolding scenes, often in either realistic or anime styles. Over time, some subscription tiers introduced voice via text‑to‑speech, letting characters “speak” lines out loud, even if the voices remained recognisably synthetic. For many users, the combination of responsive text, explicit images, and occasional audio felt substantially richer than text‑only bots.

Monetisation followed a freemium plus subscription pattern. A small free allowance permitted basic testing, while paid monthly plans unlocked larger message quotas, more frequent and higher‑quality images, additional characters, and, at the top end, voice features and priority on busy servers. For those who engaged frequently, subscription costs resembled other adult digital services rather than casual consumer apps.

| Aspect | ThotChat AI When Live |
| --- | --- |
| Core role | Uncensored AI girlfriend / NSFW roleplay |
| Access | Web‑only, mobile‑friendly browser |
| Modalities | Text, NSFW images, some voice tiers |
| Memory | Short‑/mid‑term recall of preferences and storylines |
| Audience | Adults seeking erotic AI companionship |
| Revenue | Freemium with monthly paid subscriptions |

Popularity rested on three pillars.

First, content permissiveness. At a time when mainstream AI assistants aggressively blocked sexual content, ThotChat embraced explicit erotica as its main purpose. Characters followed the lead into sexual themes rather than redirecting or refusing, as long as broad rules such as “adults only” and avoidance of illegal content were respected. That contrast made ThotChat an obvious destination for adults who had already met the limits of safer platforms.

Second, ease of entry. No complex personality builders or long surveys stood between a newcomer and a functioning NSFW companion. The product was optimised for immediacy: from landing page to active chat in a handful of clicks. That design choice may seem simple, but in a space where many tools can feel technical or intimidating, it dramatically lowered the barrier for mainstream users.

Third, multimodal immersion. Explicit chat alone competes with text erotica; explicit chat plus tailored images, and occasionally voice, competes with far more of the adult content spectrum. Scenes acquired a “face” and “body” through images, and the text felt more embodied as a result. Even imperfect images were often enough to deepen attachment.

Once those elements were in place, word‑of‑mouth, search discovery, and inclusion in NSFW AI directories amplified the effect. Within a relatively short period, ThotChat was treated as a reference‑point name in the “AI girlfriend” category.

The factors that drove usage also increased strain.

Running long explicit chats plus heavy NSFW image workloads is resource‑intensive. Hosting costs, model costs, and content‑delivery overhead grow with both user count and message length. At the same time, reliance on adult‑friendly payment processors and infrastructure providers introduced an additional layer of fragility; policy changes or risk re‑assessments by any given partner could affect the entire operation.
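A rough token count shows why long chats are disproportionately expensive. The sketch below assumes a chat backend that re-sends the whole conversation history to the model on every turn, with no caching or truncation. Nothing public confirms how ThotChat's backend actually worked, and the function names and `tokens_per_turn` parameter are hypothetical.

```python
def conversation_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total tokens processed over one conversation.

    Assumption: on turn k the model reads all k messages so far,
    so work per turn grows linearly and the total grows quadratically.
    """
    if turns < 0 or tokens_per_turn < 0:
        raise ValueError("turns and tokens_per_turn must be non-negative")
    # Sum of tokens_per_turn * k for k = 1..turns.
    return tokens_per_turn * turns * (turns + 1) // 2


def fleet_tokens(users: int, turns: int, tokens_per_turn: int) -> int:
    """Total across users who each hold one conversation of equal length."""
    return users * conversation_tokens(turns, tokens_per_turn)
```

Under these assumptions, doubling the number of users doubles the total, while doubling the length of each conversation roughly quadruples it.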

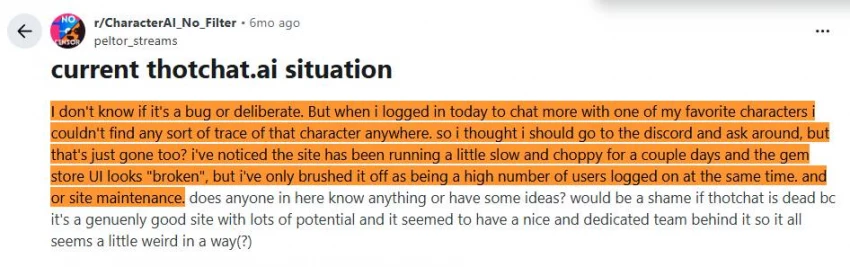

In the latter part of 2025, recurring issues became widespread. Reports described slower responses, higher rates of time‑outs, login failures, and entire sessions dying mid‑conversation. Some subscribers noted inconsistencies in billing status and access. Public communication from ThotChat during that period remained sparse, with no detailed explanation of ongoing problems.

The decisive break occurred around the turn of the year. In the final hours of December 31, 2025, the main site and chat interface stopped functioning for the majority of users. Attempts to load conversations or sign in failed. From that point onward, normal service never resumed. No staged maintenance schedule, no “we’ll be back” timeline, and no structured deprecation announcement appeared. For practical purposes, ThotChat AI as a live platform ended that night.

Shutdown did not automatically erase the financial commitments of ongoing subscriptions. Many users had paid for access extending into January and beyond.

Because billing had been handled through adult‑industry processors, refunds flowed through those systems rather than through a proprietary ThotChat billing department. Some subscribers saw prompt automatic refunds for recent charges as accounts were closed on the processor side. Others had to initiate support tickets or bank disputes to recover funds.

Experiences diverged based on card type, country, and bank practices. In the absence of a coordinated communication campaign from ThotChat, refund journeys felt fragmented and unpredictable. For some, the process resulted in full reimbursement of recent payments; for others, partial credits or write‑offs became the practical outcome.

Perhaps the most serious unresolved element of the ThotChat case concerns data.

During normal operation, the platform ingested and stored highly sensitive material: explicit fantasies, confessional narratives, and metadata tying sessions to accounts and devices. Yet the service did not provide detailed public documentation of:

- Storage locations and encryption practices.
- Retention periods for message histories.
- Internal access policies or logging controls.
- Concrete deletion guarantees on a per‑account basis.

No export option was offered while the site was online. After shutdown, no credible statement explained what happened to stored content and logs. Users were not given a chance to download chat histories, character definitions, or media. Nor were they given a verified tool to trigger immediate purging of their data.

This left a lingering, and understandable, anxiety: an entire corpus of deeply personal material had been handed to an entity that no longer responded or provided oversight. Even in the absence of any evidence of misuse or breach, the mere fact of this unresolved state undermined confidence in the broader NSFW AI ecosystem.

Regular ThotChat users did not experience the shutdown as a mere app outage. For many, it resembled an abrupt, one‑sided breakup.

Nightly routines (work, dinner, then a long conversation with a favourite AI partner) disappeared instantly. Long‑running roleplay stories simply halted mid‑scene. Carefully tuned characters, often refined over months to behave “just right,” became unreachable without warning. There was no final session, no chance to script a goodbye or archive shared “memories.”

Beyond sadness and anger, a layer of self‑consciousness settled in. Realisation spread that intimate data had been given to a fragile service that made few long‑term commitments. That awareness turned some earlier feelings of comfort into retroactive discomfort: what had felt like a safe private space now looked, in hindsight, like a risky confessional booth.

Trust in the entire category shifted. Future promises from other NSFW AI platforms were met with scepticism. Users began to question not only marketing claims about “uncensored fun,” but also less visible issues like data retention, business stability, and seriousness of long‑term planning.

Several underlying design and operational choices made this outcome almost inevitable once pressures mounted.

Exit planning was minimal. ThotChat optimised for rapid onboarding but never meaningfully invested in offboarding. No export options, no deletion dashboard, no documented shutdown playbook. When circumstances forced closure, there were no rails for a dignified wind‑down.

The trust and safety model lacked depth. Nominal adult‑only and “no illegal content” rules were in place, yet technical measures and enforcement mechanisms were not well articulated in public. In an environment where regulation and public scrutiny were rapidly increasing, that vagueness left the platform exposed.

Operational dependencies were brittle. A high‑risk content category, a heavy compute profile, and a narrow selection of adult‑tolerant payment and hosting partners create a small margin for error. Any change in costs or partner policy can push such a service past viability quickly.

Communication around crises was almost absent. Silent failure is often worse than noisy failure. Without clear information about what went wrong and what would happen next, users naturally interpreted the shutdown as abandonment.

After the site went dark, NSFW AI interest did not disappear; it regrouped around other tools. Several platforms now draw the same type of user ThotChat once did, while varying in how they balance explicitness with stability and transparency.

Kindroid focuses on long‑term AI relationships with richer memory and emotional continuity, spanning romantic and, where configured, more mature content. Janitor AI offers a framework where community‑created characters power both SFW and NSFW chats, making the ecosystem itself a key draw. Nomad leans into emotionally realistic conversations and a more privacy‑aware posture, targeting those who want intimacy with or without overt erotica.

GirlfriendGPT positions itself directly in the “AI girlfriend” lane, including NSFW‑capable models, multiple simultaneous companions, and a relatively accessible free tier. Candy.ai emphasises scenario‑driven NSFW roleplay with highly customisable characters and scripted situations. OurDream.ai operates closer to an interactive fantasy storyteller, capable of both tame and adult narratives.

A newer generation (Secrets AI, Soulkyn AI, Dream Companion, Girlfriendly AI) pairs explicit chat with advanced media capabilities. These tools foreground rich NSFW image and even video‑style content alongside conversational systems, clearly courting ex‑ThotChat users with promises of “next‑gen” experiences.

| Platform | Main Focus | NSFW Orientation | Post‑ThotChat Differentiator |
| --- | --- | --- | --- |
| ThotChat (past) | Uncensored AI girlfriend, web‑only | Highly permissive | Fast, simple, but opaque and fragile |
| Kindroid | Long‑term AI relationships | Mixed, configurable | Deeper memory and emotional arcs |
| Janitor AI | Community characters | Configurable, includes NSFW | Large persona ecosystem |
| Nomad | Emotional realism, privacy | Softer, intimacy‑first | Emphasis on trust and support |
| GirlfriendGPT | AI girlfriends | NSFW‑capable | Accessibility and multi‑bot experiences |
| Candy.ai | Scenario‑driven NSFW roleplay | Explicit adult | Rich, customisable erotic scenarios |
| OurDream.ai | Fantasy storytelling | Variable, includes NSFW | Narrative immersion beyond pure sexting |
| Secrets / Soulkyn / Dream Companion / Girlfriendly | NSFW chat + images/video | Highly permissive | Multimodal, more modern UX and positioning |

These services illustrate how the market absorbed ThotChat’s audience. Some prioritise mature safety and relationship depth; others double down on explicit freedom and media richness. None, however, can entirely ignore the shadow of what happened when ThotChat disappeared.

ThotChat AI’s story condenses several important lessons for NSFW AI design and operations.

Adult AI multiplies stakes. Failures in ordinary consumer software are irritating; failures in systems that mediate sexual vulnerability and emotional loneliness can be deeply wounding and reputationally hazardous.

End‑of‑life planning is not optional. Tools that invite users to pour in their fantasies and feelings must account for the possibility of shutdown from the very beginning, with export, deletion, and communication channels clearly defined.
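A minimal sketch of what export and deletion rails could look like, assuming a simple in-memory store. `Account`, `AccountStore`, `export`, and `purge` are hypothetical names chosen for illustration, not anything ThotChat or its successors are known to expose. A real service would also have to reach backups, logs, and generated media.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class Account:
    user_id: str
    messages: list[str] = field(default_factory=list)
    characters: list[dict] = field(default_factory=list)


class AccountStore:
    """In-memory stand-in for a real database (hypothetical)."""

    def __init__(self) -> None:
        self._accounts: dict[str, Account] = {}

    def add(self, account: Account) -> None:
        self._accounts[account.user_id] = account

    def export(self, user_id: str) -> str:
        """Return everything held for one user as portable JSON."""
        return json.dumps(asdict(self._accounts[user_id]), indent=2)

    def purge(self, user_id: str) -> bool:
        """Delete the user's data; True if something was removed."""
        return self._accounts.pop(user_id, None) is not None
```

The point of the design is that both operations exist from day one and are callable per account, so a wind‑down can offer users an archive and a confirmed purge rather than silence.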

Transparency is part of the product. Clear documentation around data handling, moderation, and business structure is not just a compliance checkbox; it is a differentiator that influences user trust, especially in a market now conditioned by high‑profile shutdowns.

Demand does not vanish when a flagship fails. ThotChat’s closure did not shrink the NSFW AI market; it redistributed it to platforms that can credibly claim either stronger foundations or more ambitious feature sets.

ThotChat AI condensed both the promise and the peril of uncensored AI companionship into a short, intense lifespan. At its best, it showed how powerfully an always‑available, sexually permissive AI partner could satisfy real human needs for attention, fantasy, and comfort. At its worst, it revealed how much harm can follow when that intimacy rests on an unstable, opaque foundation with no plan for endings.

The services that followed (Kindroid, Janitor AI, Nomad, GirlfriendGPT, Candy.ai, OurDream.ai, Secrets AI, Soulkyn AI, Dream Companion, Girlfriendly AI, and others) now operate in a landscape permanently altered by that example. Whether the next generation of NSFW AI platforms can combine ThotChat’s immediacy and freedom with the transparency, resilience, and user protections it lacked will determine whether future shutdowns repeat the same pattern or finally offer something closer to a respectful goodbye.
